Should you discretize continuous features for Machine Learning? 🤖

Let's say that you're working on a supervised Machine Learning problem, and you're deciding how to encode the features in your training data.

With a categorical feature, you might consider using one-hot encoding or ordinal encoding. But with a continuous numeric feature, you would normally pass that feature directly to your model. (Makes sense, right?)

However, one alternative strategy that is sometimes used with continuous features is to "discretize" or "bin" them into categorical features before passing them to the model.

First, I'll show you how to do this in scikit-learn. Then, I'll explain whether I think it's a good idea!

How to discretize in scikit-learn

In scikit-learn, we can discretize using the KBinsDiscretizer class:

When creating an instance of KBinsDiscretizer, you define the number of bins, the binning strategy, and the method used to encode the result:

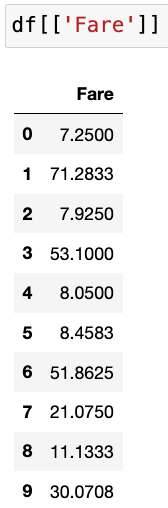

As an example, here's a numeric feature from the famous Titanic dataset:

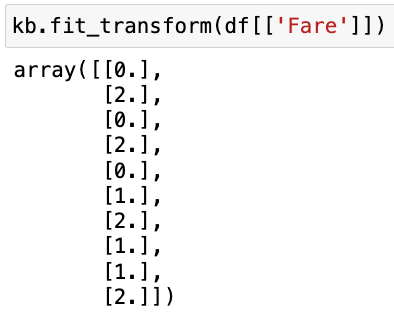

And here's the output when we use KBinsDiscretizer to transform that feature:

Because we specified 3 bins, every sample has been assigned to bin 0 or 1 or 2. The smallest values were assigned to bin 0, the largest values were assigned to bin 2, and the values in between were assigned to bin 1.

Thus, we've taken a continuous numeric feature and encoded it as an ordinal feature (meaning an ordered categorical feature), and this ordinal feature could be passed to the model in place of the numeric feature.

Is discretization a good idea?

Now that you know how to discretize, the obvious follow-up question is: Should you discretize your continuous features?

Theoretically, discretization can benefit linear models by helping them to learn non-linear trends. However, my general recommendation is to not use discretization, for three main reasons:

- Discretization removes all nuance from the data, which makes it harder for a model to learn the actual trends that are present in the data.

- Discretization reduces the variation in the data, which makes it easier to find trends that don't actually exist.

- Any possible benefits of discretization are highly dependent on the parameters used with KBinsDiscretizer. Making those decisions by hand creates a risk of overfitting the training data, and making those decisions during a tuning process adds both complexity and processing time. As such, neither option is attractive to me!

For all of those reasons, I don't recommend discretizing your continuous features unless you can demonstrate, through a proper model evaluation process, that it's providing a meaningful benefit to your model.

Resources

🔗 Discretization in the scikit-learn User Guide

🔗 Discretize Predictors as a Last Resort from Feature Engineering and Selection (section 6.2.2)

P.S. Want to get better at Machine Learning? Check out my course, Master Machine Learning with scikit-learn!